Beneath the Surface: Experts Claim Google’s AI Report Skips Vital Safety Insights

Experts say Google’s latest AI model report lacks key safety details.

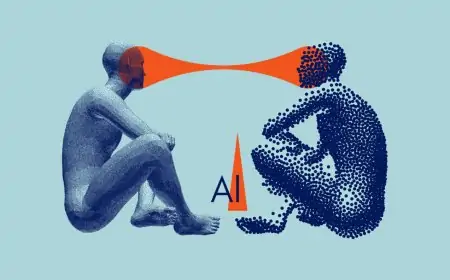

On Thursday, weeks after launching its most powerful AI model yet, Gemini 2.5 Pro, Google published a technical report showing the results of its internal safety evaluations. However, the report is light on details, experts say, making it difficult to determine which risks the model might pose.

Technical Updates

Technical reports provide useful — and unflattering, at times — info that companies don’t always widely advertise about their AI. By and large, the AI community sees these reports as good-faith efforts to support independent research and safety evaluations.

Google takes a different approach to safety reporting than some of its AI rivals, publishing technical reports only once it considers a model to have graduated from the “experimental” stage. The company also doesn’t include findings from all of its “dangerous capability” evaluations in these write-ups; it reserves those for a separate audit.

Thomas Woodside, co-founder of the Secure AI Project, said that while he’s glad Google released a report for Gemini 2.5 Pro, he’s not convinced of the company’s commitment to delivering timely supplemental safety evaluations. Woodside pointed out that the last time Google published the results of dangerous capability tests was in June 2024 — for a model announced in February that same year.

“I hope this is a promise from Google to start publishing more frequent updates,” Woodside told TechCrunch. “Those updates should include the results of evaluations for models that haven’t been publicly deployed yet, since those models could also pose serious risks.”

Google may have been one of the first AI labs to propose standardized reports for models, but it’s not the only one that’s been accused of underdelivering on transparency lately. Meta released a similarly skimpy safety evaluation of its new Llama 4 open models, and OpenAI opted not to publish any report for its GPT-4.1 series.

Safety Rails

Hanging over Google’s head are assurances the tech giant made to regulators to maintain a high standard of AI safety testing and reporting. Two years ago, Google told the U.S. government it would publish safety reports for all “significant” public AI models within scope. The company followed up on that promise with similar commitments to other countries, pledging to “provide public transparency” around AI products.

Kevin Bankston, a senior adviser on AI governance at the Center for Democracy and Technology, called the trend of sporadic and vague reports a “race to the bottom” on AI safety.

“Combined with reports that competing labs like OpenAI have shaved their safety testing time before release from months to days, this meager documentation for Google’s top AI model tells a troubling story of a race to the bottom on AI safety and transparency as companies rush their models to market,” he told TechCrunch.

Google has said in statements that it conducts safety testing and “adversarial red teaming” for models ahead of release, even though that work is not detailed in its technical reports.